Hi @Allan_Zimmermann @Allan_Private, OpenRPA consumes very high memory when it runs continuously for around 1 hour. In another scenario, if a first process runs for 30 minutes and a second process runs for 30 minutes (after 2 hours), it still consumes very high memory. Because of this, our process gets stuck most of the time, and OpenRPA requires a restart to resume it. Requesting your assistance with this issue.

OpenRPA does not by itself use a lot of memory after 30 minutes or 2 hours (unless there is a bug, in which case please create a small workflow that reproduces the behavior so I can get it fixed).

First of all, make sure NOT to have any designers open while running in production. The workflow designer requires significantly more RAM because of the way it caches things, plus all the debugging code and background recompiling needed to allow debugging.

If you already did this, make sure you are not just sending all your data as arguments (like passing a DataRow/DataTable). This will at minimum double your RAM usage, since the data needs to be serialized and kept in memory for each workflow.

Secondly, if you need to work with large amounts of data, make sure to split your workflow into smaller units and enumerate over the data, so you do not need to load and work with all of it at the same time (maybe work items are a good fit for your use case?).
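To illustrate the "enumerate instead of loading everything" idea in general terms, here is a minimal Python sketch. It is not OpenRPA code: `read_rows` and `process_batch` are hypothetical stand-ins for your own data source and your own unit of work, and the batch size is arbitrary. The point is only that at most one batch is held in memory at a time.

```python
from itertools import islice
from typing import Dict, Iterable, Iterator, List, TypeVar

T = TypeVar("T")


def batches(items: Iterable[T], size: int) -> Iterator[List[T]]:
    """Yield lists of at most `size` items, reading lazily from `items`."""
    if size < 1:
        raise ValueError("size must be at least 1")
    iterator = iter(items)
    while True:
        batch = list(islice(iterator, size))
        if not batch:
            return
        yield batch


def read_rows(count: int) -> Iterator[Dict[str, object]]:
    """Hypothetical data source: produces rows one by one instead of a full table."""
    for i in range(count):
        yield {"id": i, "name": f"row-{i}"}


def process_batch(batch: List[Dict[str, object]]) -> int:
    """Hypothetical unit of work; returns how many rows it handled."""
    return len(batch)


def run(count: int, size: int) -> int:
    """Process `count` rows in batches of `size`, keeping only one batch alive."""
    handled = 0
    for batch in batches(read_rows(count), size):
        handled += process_batch(batch)
    return handled
```

In workflow terms, each batch would roughly correspond to one small child workflow or one work item, rather than one large workflow that holds the whole table.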

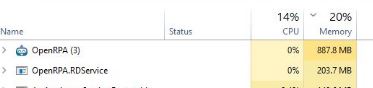

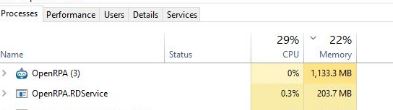

The average process (Main) workflow size is 150 KB; the largest is 230 KB. Please recommend the best size. There is no bug in the flow. Designers are disabled in production (we enabled IsAgent in the settings file). We are using a queue mechanism in our project. Please find attached images showing OpenRPA's memory consumption before the run (887.8 KB) and after the run (1133.3 MB) for a 15-minute run. The memory goes even higher if I run another process. Please advise how to handle this.

I don’t doubt it’s using a lot of memory; I’ve seen installations where it uses 20 gigabytes.

You need to look at your workflow, figure out what is consuming memory, and see how that can be optimized. I cannot guess what that is, but once you locate which part of your workflow is allocating a lot of RAM, I’m more than happy to help you come up with alternative methods of dealing with that data (if possible).

We have 13 workflows (XAML files averaging 150 KB) which are called sequentially. Each workflow has 15 arguments of type String with a 50-character limit. Do we need to take any action to release those arguments after each bot runs?

No. The most common problem I see is how people use data tables, or that they create huge workflows that are designed to run for a long time enumerating over something.

There is one other thing, though. OpenRPA keeps the “workflow instance” in memory until it has been saved to the database and OpenFlow. If you are running many small workflows in a short period of time, it can take up to 15 minutes for those instances to be released.

You can disable that in a few ways (mentioned here).

With skip_online_state it only saves the instance to the local database, but it still keeps it in memory for up to 15 minutes. Or you can completely skip saving state by also setting disable_instance_store to true.

See if that helps with your memory usage and, if so, whether you can live without the comfort of statistics and of being able to use state machines across restarts.
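As a rough sketch, the two settings named above might look like this in settings.json. This assumes both are plain top-level boolean keys; check them against your own settings.json, and leave all other existing keys in the file untouched.

```json
{
  "skip_online_state": true,
  "disable_instance_store": true
}
```

Restarting OpenRPA after editing the file is a safe assumption, so that the new values are actually picked up.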

We tried both options by making the changes in settings.json, but had no luck. Please suggest any other options.

Then you need to go back and look at your workflows and see how you can minimize the memory footprint. I already gave you pointers on the things I would look for.

[Edit] I accidentally hit a macro key.

I’m facing the same issue with a long-running process. What I cannot understand, @Allan_Zimmermann, is why the memory is not freed once the workflow stops running. If I am somehow accumulating objects because of some code flaw, and they are not freed by the GC while running, how come they are not freed once the workflow stops? Then if I run another project afterwards, OpenRPA just adds to the memory consumption left over from the finished workflow…

I’m getting more and more reports about this.

Weird that it pops up after many months with no update to OpenRPA, but clearly something is going on.

I’m doing some debugging with another customer who can easily reproduce this, so I will see if that gives me a hint…

That sounds great. I tried to make a smaller workflow to reproduce it, but it was difficult to build something with the same characteristics as what I have in production. So I'm happy to hear there is someone else with a reproducible case.

I found a bug in my code that made it not release the history of old workflows.

I had an issue in the exact same code before, so to avoid more issues I’m now simply removing all completed workflow instances once they have been saved.

Please test and see if this new version fixes your memory issues too.